Model-Based Systems Engineering (MBSE) is evolving, and a key question arises: will MBSE benefit from textual notation? The answer is a resounding yes, but it is not the whole story. Graphical representations, tool integration, and machine-readable model content are still needed. Here’s why.

Textual Notation as a Complementary Tool

Textual notation is already a fundamental part of many basic modeling tools. Tools like PlantUML and Mermaid derive graphical representations from textual descriptions, making it easier for engineers to visualize their models. Similarly, integrating domain-specific languages (DSLs) like Franca IDL into modeling tools like MagicDraw has proven effective. With such integrations, users can either code or draw, and the result fits in with the rest of the model content, which gives them flexibility and ease of use.
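
To make the text-to-diagram idea concrete, here is a minimal sketch in Python that turns a tiny in-memory model into Mermaid flowchart text. The `Model` class, the `to_mermaid` function, and the example blocks are hypothetical names chosen for illustration; they are not taken from any of the tools mentioned above.

```python
from dataclasses import dataclass, field


@dataclass
class Model:
    """Hypothetical minimal model: named blocks plus directed connections."""
    blocks: dict[str, str] = field(default_factory=dict)        # id -> display label
    links: list[tuple[str, str]] = field(default_factory=list)  # (source id, target id)


def to_mermaid(model: Model) -> str:
    """Render the model as Mermaid flowchart text with a left-to-right layout."""
    lines = ["flowchart LR"]
    # One node declaration per block, using the id as node name.
    for block_id, label in model.blocks.items():
        lines.append(f'    {block_id}["{label}"]')
    # One arrow per directed connection.
    for source, target in model.links:
        lines.append(f"    {source} --> {target}")
    return "\n".join(lines)


# Illustrative content only.
model = Model(
    blocks={"sensor": "Speed Sensor", "ecu": "Engine ECU"},
    links=[("sensor", "ecu")],
)
print(to_mermaid(model))
```

The printed text can be pasted into any Mermaid renderer to obtain the diagram. The point of the sketch is that the text stays the single source and the picture is derived from it.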

The Emergence of Two-Way Solutions

Advanced tools are taking this integration further. For instance, MagicDraw now offers two-way translation between SysML v2 textual notation and graphical representations. Both sides remain editable, akin to how Markdown plugins work in VS Code. Such advancements are critical because they cater to both textual and graphical preferences, which supports broader acceptance and usability.

Bridging the Gap Between Coders and Modelers

Textual notations are particularly appealing to those who are closer to coding. For coders, the familiarity of textual input can significantly lower the barrier to entry into the modeling world. Integrating textual notations into Integrated Development Environments (IDEs) where coding happens can streamline workflows and enhance productivity.

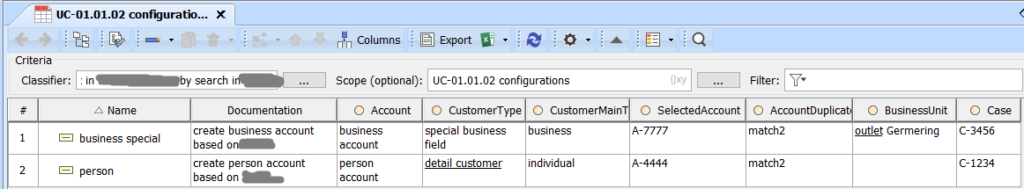

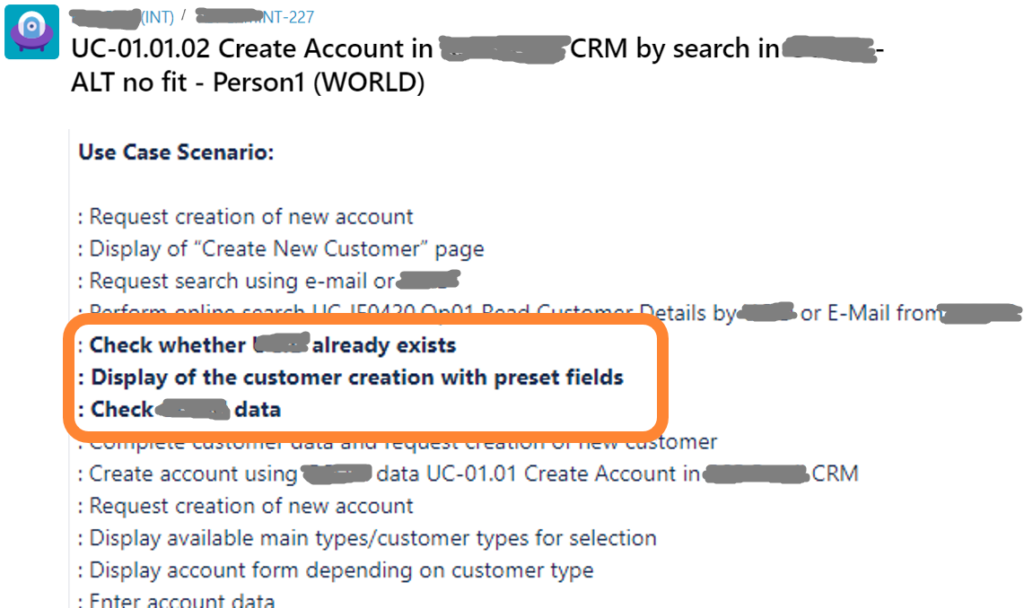

However, managers, architects, designers, and analysts depend on graphical representations, output management, and comprehensive lists that stay consistent with the models. To engage both technical and non-technical stakeholders effectively, it is therefore essential to provide textual notations together with the corresponding graphical representations.

Addressing Broader Needs

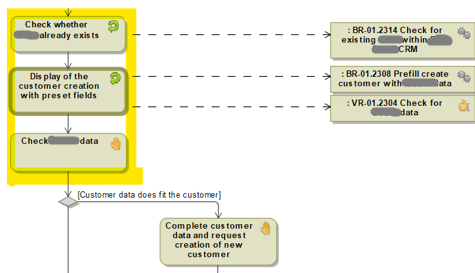

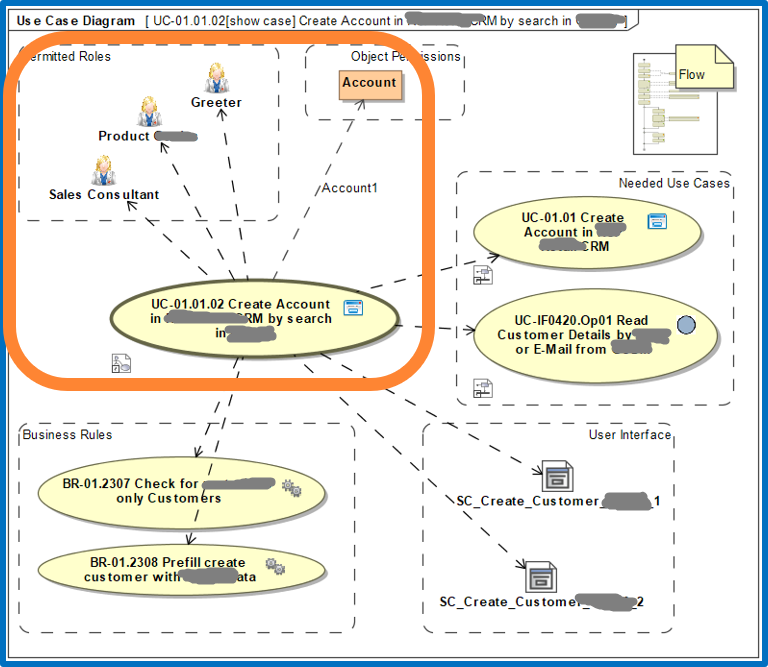

More than a decade of experience in automotive projects makes it clear that MBSE must address a range of needs to be truly successful and widely accepted. Models must cover cross-product and cross-solution content, product solutions, functional modeling, system architecture, software architecture, and boardnet modeling.

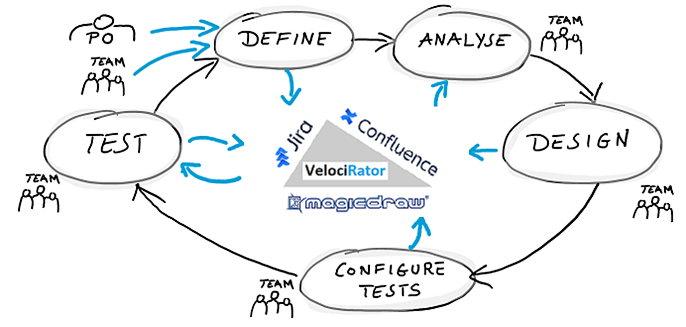

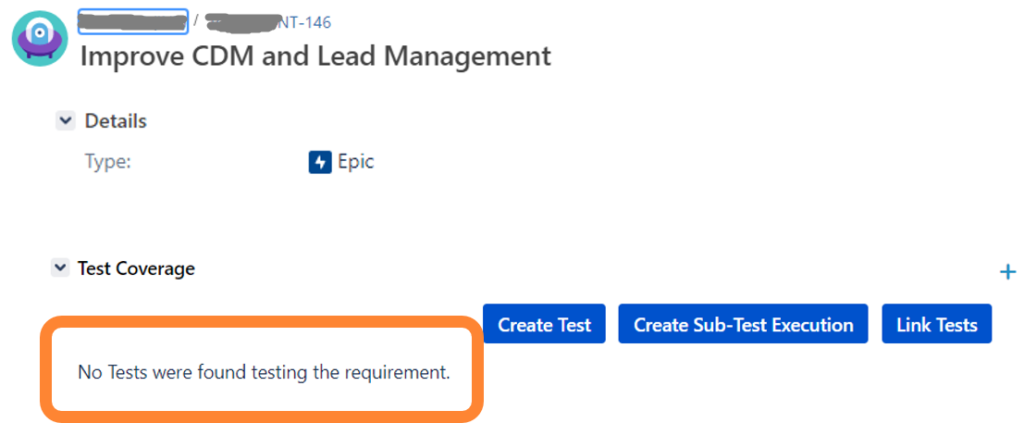

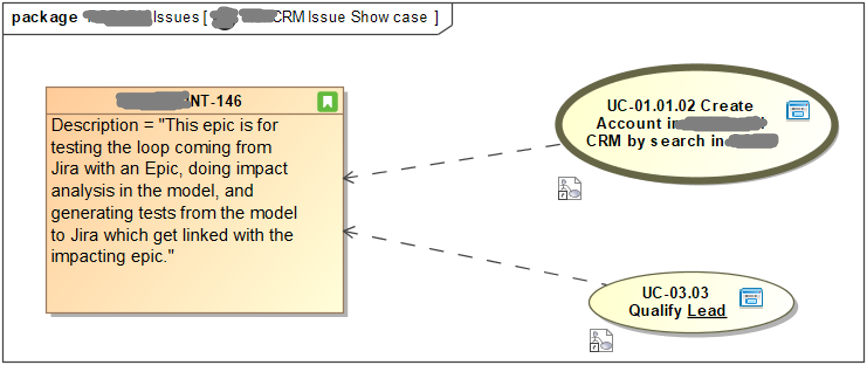

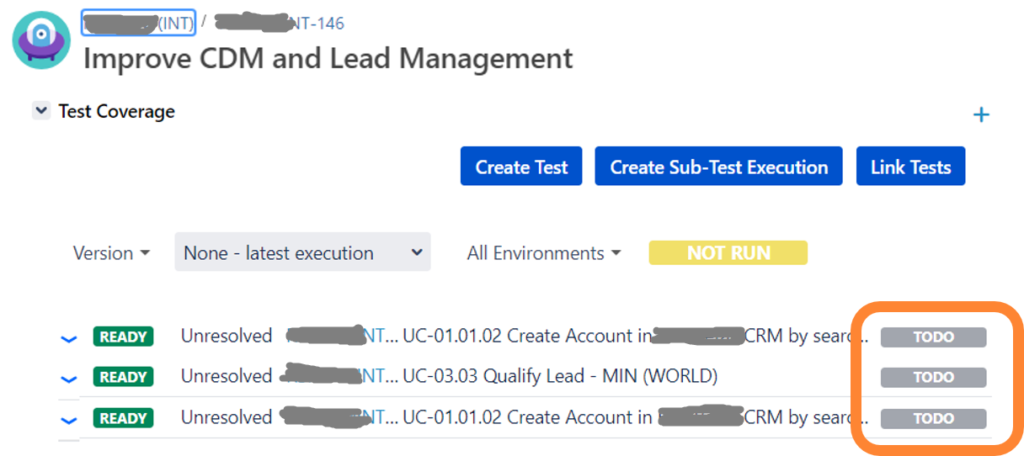

Additionally, the existing model content in UML and SysML v1 is predominantly graphical, so the transition to textual notations won’t happen overnight. Tool integration is just as important. The enterprise environment is a complex network of tools for planning, requirements, system modeling, simulation, test management, and implementation. Content is often replicated between neighboring tools or linked across them, and that only works when the tools integrate seamlessly.

Machine-Readable Models and Analytics

Models that are not machine-readable are essentially ineffective and serve only as “marketing” diagrams. Business users place a high value on machine-readable models and on model analytics, for example making model content available to tools like Tableau.
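
As a rough illustration of what machine-readable model content enables, the following Python sketch exports a handful of model elements to CSV, a format that analytics tools such as Tableau can import, and runs two simple checks on the same data. The element records, field names, and function names are assumptions made up for this example and do not reflect any particular tool's export format.

```python
import csv
import io

# Hypothetical model content, as it might be extracted from a modeling tool.
ELEMENTS = [
    {"id": "F-001", "kind": "function", "name": "Measure speed", "allocated_to": "SYS-010"},
    {"id": "F-002", "kind": "function", "name": "Limit torque", "allocated_to": ""},
    {"id": "SYS-010", "kind": "system", "name": "Speed Sensor", "allocated_to": ""},
]

FIELDS = ["id", "kind", "name", "allocated_to"]


def elements_to_csv(elements: list[dict[str, str]]) -> str:
    """Serialize model elements to CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS, lineterminator="\n")
    writer.writeheader()
    writer.writerows(elements)
    return buffer.getvalue()


def count_by_kind(elements: list[dict[str, str]]) -> dict[str, int]:
    """Count elements per kind, a typical starting point for a dashboard."""
    counts: dict[str, int] = {}
    for element in elements:
        counts[element["kind"]] = counts.get(element["kind"], 0) + 1
    return counts


def unallocated_functions(elements: list[dict[str, str]]) -> list[str]:
    """Return the ids of functions that are not yet allocated to a system."""
    return [
        element["id"]
        for element in elements
        if element["kind"] == "function" and not element["allocated_to"]
    ]


csv_text = elements_to_csv(ELEMENTS)
print(csv_text)
print(count_by_kind(ELEMENTS))
print(unallocated_functions(ELEMENTS))
```

None of these checks are possible on a diagram that exists only as a picture, which is the practical difference between a model and a “marketing” diagram.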

Conclusion

Textual notation, such as that of SysML v2, is foundational and will significantly benefit MBSE. However, it must complement diagramming and address other critical needs to be truly effective. The standards are in place, and now it’s time for the tool vendors to catch up. By embracing both textual and graphical representations and addressing the diverse needs of stakeholders, MBSE can achieve greater acceptance and success.